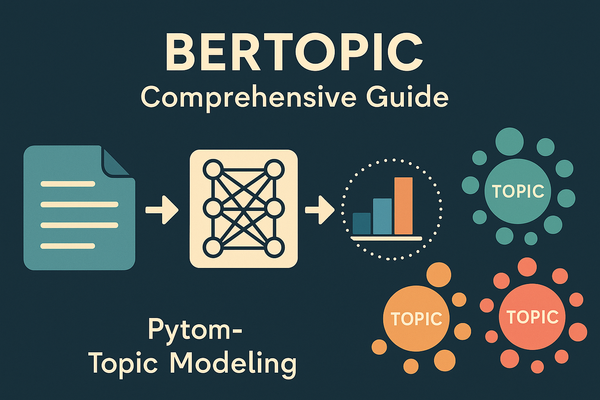

Leveraging LLMs in Recommendation Systems

Discover personalized content effortlessly with LLM-powered recommendation systems!

The advent of Large Language Models (LLMs) in recommendation systems represents a fundamental shift in the way people engage with content suggestions. LLMs have gone beyond conventional recommendation techniques to interpret user preferences with previously unheard-of precision. Through the examination of user interactions and textual data, LLMs are able to reveal complex patterns in user behavior, which opens the door to highly customized suggestions based on individual preferences and interests.

Combining recommendation systems with LLMs improves the user experience by making content discovery smoother and simpler. The combination improves suggestion accuracy and gives the system a deeper understanding of user intent, which makes content discovery more engaging and fulfilling.

We'll cover two research papers that explore this area, then conclude with a worked example, including code, of how to use an LLM in a movie recommendation system.

Here we go!

Survey of LLM for Recommendations

We begin with a survey on the use of Large Language Models (LLMs) in recommendation systems, organized into the following topics:

Introduction to LLMs in Recommendation Systems

Large Language Models (LLMs) have emerged as game-changers in the realm of recommendation systems, significantly enhancing the way users interact with personalized content. By leveraging vast amounts of data through self-supervised learning, LLMs excel in capturing nuanced contextual information and understanding user queries, item descriptions, and other textual inputs more effectively than ever before.

One of the key advantages of incorporating them into recommendation systems is their ability to tap into extensive external knowledge encoded within them. This enables them to establish correlations between items and users based on a rich understanding of language and context.

Moreover, LLMs have the potential to address the common challenge of data sparsity in traditional recommendation systems. With their capability for zero/few-shot recommendations, they can generalize to unseen candidates by drawing upon their pre-training with factual information, domain expertise, and common-sense reasoning.

To provide a structured understanding of LLM-based recommendation systems, researchers have categorized these models into two major paradigms: Discriminative LLM for Recommendation (DLLM4Rec) and Generative LLM for Recommendation (GLLM4Rec). While DLLMs focus on enhancing recommendation quality through techniques like fine-tuning and prompt tuning, GLLMs bring a new dimension to the table with their ability to generate personalized and context-aware recommendations.

Modeling Paradigms and Adaptation Strategies:

In this section, we dive deeper into the three modeling paradigms that are commonly used in recommendation systems (RS) when leveraging large language models (LLMs):

- LLM Embeddings + RS: This approach treats the LLM as a feature extractor. Item and user features are fed into the LLM, and the resulting embeddings are used as input to a traditional RS model. This paradigm is suitable when the LLM is not designed for direct recommendation. With LLM embeddings, the RS model can capture higher-level semantic information and make more informed recommendations.

- LLM Tokens + RS: In this paradigm, the input sequence consists of the profile description, behavior prompt, and task instructions. The LLM generates tokens from the item and user features, and these tokens capture potential preferences through semantic mining. The generated tokens are then integrated into the decision-making process of a recommendation system. This approach is particularly useful when the LLM is trained on a vast corpus of text data, allowing it to generate informative recommendations.

- LLM as RS: In this modeling paradigm, the LLM is viewed as a powerful recommendation system directly generating recommendations. The input sequence usually consists of item descriptions and user profiles, and the output sequence provides a ranked list of recommended items. This approach eliminates the need for additional interaction between the LLM and the RS model, making it more computationally efficient. However, it requires careful tuning of the LLM's hyperparameters to ensure accurate recommendations.
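To make the first paradigm (LLM Embeddings + RS) concrete, here is a minimal sketch. It assumes a stand-in encoder, `toy_embed`, that hashes words into a small vector; in a real system this would be replaced by embeddings from an actual LLM. The downstream "RS" here is a simple cosine-similarity ranker, and all names and data are hypothetical.

```python
import math
import zlib

DIM = 16  # size of the toy embedding space (arbitrary choice)


def toy_embed(text):
    """Stand-in for an LLM encoder: hash each word into one of DIM buckets."""
    vec = [0.0] * DIM
    for word in text.lower().split():
        vec[zlib.crc32(word.encode("utf-8")) % DIM] += 1.0
    return vec


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def recommend(liked_descriptions, candidates, top_k=2):
    """Downstream recommender: rank candidates by similarity to the user vector."""
    item_vecs = [toy_embed(d) for d in liked_descriptions]
    # User representation = mean of the embeddings of the liked items.
    user_vec = [sum(dim) / len(item_vecs) for dim in zip(*item_vecs)]
    scored = [(cosine(user_vec, toy_embed(desc)), title)
              for title, desc in candidates.items()]
    return [title for _, title in sorted(scored, reverse=True)[:top_k]]


liked = ["romantic comedy wedding", "period drama romance"]
candidates = {
    "Wedding Bells": "romantic comedy wedding",
    "Robot Wars": "space battle robots",
}
print(recommend(liked, candidates, top_k=1))
```

The point of the design is the separation of concerns: the encoder only produces features, and any conventional recommender can consume them.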

Different methods to adapt LLMs for task-specific applications

The image shows a diagrammatic representation of four different methods to adapt LLMs for task-specific applications:

- Fine-tuning Specific Loss of Task A: This involves taking a pre-trained LLM and updating its parameters (or fine-tuning it) while it’s being trained on Task A’s specific dataset. This tailors the model’s outputs to better suit the nuances of the specific task – for instance, recommending products based on user behavior or aligning movies to viewer preferences.

- Prompting / In Context Learning: This is a subtler form of adaptation where an LLM is given a prompt that contains some inline instruction or context. The model uses this guidance to generate outputs relevant to the task without any changes to the model parameters. For example, by giving an LLM contextual clues about a user's preferences, it can better predict what movies, books, or products to recommend.

- Prompt Tuning: Here, the model learns from prompts that are either hard (discrete text) or soft (learnable continuous vectors). Only a minimal set of parameters linked to the prompts is adjusted, not the whole model, so the model is steered toward a specific task (e.g., recommendation) without substantial computational overhead.

- Instruction Tuning: Finally, there is a more ambitious approach in which the LLM is fed explicit instructions and is expected to handle multiple tasks (B, C, D, ...). The language model not only generates outputs based on these instructions but does so while being aware of the different objectives it's trained on, effectively cross-learning from multiple contexts.
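As an illustration of the Prompting / In-Context Learning method, the sketch below assembles a few-shot prompt from worked examples. The function name, prompt wording, and example data are assumptions made for illustration; the key property is that only the input text changes and no model parameters are updated.

```python
def build_few_shot_prompt(examples, watched, candidates):
    """Assemble an in-context-learning prompt; no model weights are changed."""
    lines = ["You are a movie recommender. Pick the best next movie for the user.", ""]
    # Each solved example shows the model the expected input/output format.
    for ex in examples:
        lines.append(f"Watched: {', '.join(ex['watched'])}")
        lines.append(f"Candidates: {', '.join(ex['candidates'])}")
        lines.append(f"Answer: {ex['answer']}")
        lines.append("")
    # The final, unanswered block is the actual query.
    lines.append(f"Watched: {', '.join(watched)}")
    lines.append(f"Candidates: {', '.join(candidates)}")
    lines.append("Answer:")
    return "\n".join(lines)


examples = [{
    "watched": ["Clueless (1995)", "Emma (1996)"],
    "candidates": ["Thief (1981)", "Pride & Prejudice (2005)"],
    "answer": "Pride & Prejudice (2005)",
}]
prompt = build_few_shot_prompt(
    examples,
    watched=["Titanic (1997)", "Love Actually (2003)"],
    candidates=["Saving Face (2004)", "On the Beach (1959)"],
)
print(prompt)
```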

Challenges and Insights in LLM-based Recommendation Systems:

Advancements in artificial intelligence have paved the way for Large Language Models (LLMs) such as BERT and the GPT series to reshape recommendation systems. These systems are at the heart of how users discover content and products, from streaming services to online shopping.

Challenges in Harnessing the Full Potential of LLMs

- Model Bias: The inherent position bias in LLMs could skew the recommendation towards popular items or higher-ranked inputs. This requires more nuanced solutions than the current random sampling techniques.

- Fairness Bias: These models often reflect the inherent biases present in their training data which could result in unfair assumptions about user demographics affecting the quality and ethicality of recommendations.

- Adaptation to User and Item Representation: A key to a successful recommendation system lies in accurately modeling users and items using diverse features. LLMs often represent these entities simplistically, which might not capture their complexities fully.
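The position-bias point can be illustrated with a toy experiment. The sketch below assumes a hypothetical `biased_ranker` that stands in for an LLM and penalises items that appear later in its input; the `RELEVANCE` scores and the penalty of 1.2 per position are made up. Averaging ranks over many randomly shuffled input orders is one simple form of the random sampling idea mentioned above, not a method prescribed by the survey.

```python
import random
from collections import defaultdict

RELEVANCE = {"A": 3.0, "B": 2.0, "C": 1.0}  # hypothetical "true" preferences


def biased_ranker(candidates):
    """Toy stand-in for an LLM ranker that favours items listed earlier."""
    scores = {item: RELEVANCE[item] - 1.2 * pos
              for pos, item in enumerate(candidates)}
    return sorted(candidates, key=lambda item: scores[item], reverse=True)


def debiased_rank(candidates, ranker, n_rounds=2000, seed=0):
    """Average each item's rank over many random input orders."""
    rng = random.Random(seed)
    rank_sum = defaultdict(float)
    for _ in range(n_rounds):
        shuffled = candidates[:]
        rng.shuffle(shuffled)
        for rank, item in enumerate(ranker(shuffled)):
            rank_sum[item] += rank
    # A lower accumulated rank means the item was placed higher on average.
    return sorted(candidates, key=lambda item: rank_sum[item])


print(biased_ranker(["C", "B", "A"]))                 # input order dominates
print(debiased_rank(["C", "B", "A"], biased_ranker))  # order effect averaged out
```

The cost is many more ranker calls per request, which is one reason more nuanced solutions are needed in practice.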

Prospective Abilities and Solutions:

- Zero/Few-Shot Learning: LLMs offer a promising way to address the cold-start problem, where recommendations must be made with little or no historical interaction data.

- Explainability: Generative LLMs have demonstrated aptitude in offering comprehensible explanations beyond mere recommendations, enhancing user trust and engagement.

The Future of Recommendation with LLMs

There is still a lot of room for growth in the study of LLMs for recommendation systems. Future systems may build in stronger ethical principles that ensure fairness, accountability, and transparency. Richer, real-time customisation could result from multidimensional inputs as computing capabilities advance. Recommendation systems have already benefited greatly from the use of large language models.

Although there are many obstacles and restrictions in the way of making the best use of these models, there is also a path that leads to creative applications, offering a rich environment for further study. LLM developments indicate not only the development of technology but also the vision of increasingly sophisticated AI tools that are focused on the needs of humans.

LlamaRec: Two-Stage Recommendation using Large Language Models for Ranking

Introduction

Recommendation systems are vital in industries like e-commerce, entertainment, and content streaming, enhancing user experience by offering personalized recommendations. Traditional recommender systems, however, encounter challenges in handling large-scale data and providing real-time suggestions. They often rely on historical interactions, limiting adaptability to changing preferences and diverse recommendations. Additionally, they struggle with inefficient inference, especially with sizable datasets.

To address these challenges, LlamaRec presents a novel framework leveraging large language models (LLMs) for ranking-based recommendations. It introduces a two-stage approach that combines LLMs' language understanding and generation capabilities with efficient ranking techniques. Unlike traditional methods reliant on autoregressive generation, LlamaRec optimizes efficiency by transforming user history and candidate items into text prompts for the LLM.

Technical Deep-Dive into LlamaRec

LlamaRec consists of the following components:

- LlamaRec introduces a two-stage recommendation framework, comprising retrieval and ranking stages, to optimize recommendation efficiency and accuracy.

- In the retrieval phase, LlamaRec employs LRURec, a sequential recommender optimized for autoregressive training, to efficiently generate potential candidate items. This initial stage effectively narrows down the item pool, ensuring that only relevant candidates proceed to the ranking phase.

- In the ranking stage, LlamaRec leverages LLM, specifically Llama 2, to perform fine-grained ranking among the retrieved candidates. The unique aspect of LlamaRec lies in its use of a predefined text prompt, combining user history and candidate items, to guide the LLM's inference process. By incorporating instruction tuning and a carefully designed prompt template, LlamaRec maximizes the LLM's understanding of user preferences while minimizing computational overhead.

- Moreover, LlamaRec uses a verbalizer approach to transform LLM outputs into probability distributions over candidate items, ensuring efficient ranking without extensive autoregressive generation.

The accompanying figure illustrates this with four candidate films labeled (A) through (D): 'The Godfather: Part II' (1974), 'Private School' (1983), 'Real Genius' (1985), and 'Angels in the Outfield' (1994). The block labeled "LLM & Head" is the language model together with its output head, which scores the candidate labels.
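The verbalizer idea can be sketched as follows. This is an illustrative reconstruction, not LlamaRec's code: it assumes each candidate is labeled with an index letter, that the model's next-token logits for those letters are available as a dictionary, and that a softmax over just those logits yields the ranking scores. The logit values are invented.

```python
import math


def verbalize(letter_logits, candidates):
    """Turn logits of index letters (A, B, C, ...) into a ranked candidate list."""
    letters = [chr(ord("A") + i) for i in range(len(candidates))]
    logits = [letter_logits[letter] for letter in letters]
    # Numerically stable softmax restricted to the candidate letters.
    max_logit = max(logits)
    exps = [math.exp(x - max_logit) for x in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    return sorted(zip(candidates, probs), key=lambda pair: pair[1], reverse=True)


candidates = [
    "The Godfather: Part II (1974)",
    "Private School (1983)",
    "Real Genius (1985)",
    "Angels in the Outfield (1994)",
]
# Hypothetical logits read from a single forward pass of the model.
letter_logits = {"A": 2.3, "B": -0.4, "C": 1.1, "D": 0.2}
for title, prob in verbalize(letter_logits, candidates):
    print(f"{prob:.3f}  {title}")
```

Because all scores come from one forward pass, no candidate title has to be generated token by token.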

Performance and Efficiency Evaluation of LlamaRec

Table 1: Main Recommendation Performance

This table showcases the evaluation of different recommendation models across three datasets: ML-100k (movies), Beauty (beauty products), and Games (video games). The models evaluated here are NARM, BERT (BERT4Rec), SAS (SASRec), LRU (LRURec), and LlamaRec.

- M@k, N@k, and R@k denote Mean Reciprocal Rank (MRR), Normalized Discounted Cumulative Gain (NDCG), and Recall, each computed at cut-off rank k, where k is 5 or 10.

- The values are scores on these metrics, with bold indicating the best result and underlining indicating the second-best result for each metric on each dataset.

- LlamaRec generally performs the best across almost all metrics, signifying its superiority over other methods.
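For readers unfamiliar with these metrics, here is a minimal sketch of how they can be computed. It assumes the common next-item setting in which each user has exactly one held-out target item, so the ideal DCG is 1 and NDCG reduces to 1 / log2(rank + 1). The function names and sample data are hypothetical.

```python
import math


def rank_of(target, ranked_items):
    """1-based rank of the target in the ranked list, or None if absent."""
    return ranked_items.index(target) + 1 if target in ranked_items else None


def evaluate(predictions, k):
    """predictions: list of (ranked_items, target) pairs, one pair per user."""
    mrr = ndcg = recall = 0.0
    for ranked_items, target in predictions:
        rank = rank_of(target, ranked_items[:k])
        if rank is not None:  # the target appears within the top k
            mrr += 1.0 / rank
            ndcg += 1.0 / math.log2(rank + 1)
            recall += 1.0
    n = len(predictions)
    return {"MRR": mrr / n, "NDCG": ndcg / n, "Recall": recall / n}


predictions = [
    (["a", "b", "c", "d", "e"], "a"),  # target at rank 1
    (["a", "b", "c", "d", "e"], "c"),  # target at rank 3
    (["a", "b", "c", "d", "e"], "z"),  # target not retrieved
]
print(evaluate(predictions, k=5))
```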

Table 3: Recommendation Performance on the Valid Retrieval Subset

This table is similar to Table 1 but specifically focuses on a 'valid retrieval subset'. In other words, it concentrates on the scenario where the ground truth item (the correct recommendation) is within the top 20 ranked items retrieved by the recommender system.

Table 4: Recommendation Performance compared to LLM based baseline models

LlamaRec outperforms traditional sequential recommendation models like NARM, SASRec, BERT4Rec, and LRURec, as well as other LLM-based methods like P5, PALR, GPT4Rec, RecMind, and POD, across various benchmark datasets. With consistently superior results in metrics such as MRR, NDCG, and Recall, LlamaRec demonstrates its efficacy in providing accurate and personalized recommendations compared to existing approaches.

In terms of efficiency, LlamaRec's one-pass forward approach significantly reduces inference time and computational overhead compared to traditional generation-based LLM methods. By leveraging a simple verbalizer and optimized retrieval process, LlamaRec ensures swift ranking without compromising recommendation quality.

Overall, LlamaRec's superior performance and efficiency hold promising implications for various industries reliant on recommendation systems. Its ability to combine accuracy with speed makes it a valuable asset for delivering personalized content in real-time, thereby enhancing user experience and engagement across diverse platforms and applications.

The approach that we would be following:

The overall approach is as follows:

- We start by asking the Language Model (LLM) to create a summary of a user's interests, focusing on the movies they've liked. We provide the titles of these movies in descending order of preference, allowing the LLM to understand the user's tastes better. This summary ("A") captures the essence of what genres, themes, or styles the user enjoys in movies.

- After obtaining the summary, we use an ALS (Alternating Least Squares) model to generate movie recommendations specifically tailored to the user. These recommendations are based on the preferences of similar users in the dataset. To ensure we don't suggest movies the user has already enjoyed, we filter out any titles present in their liked movie list. Let the recommendations be called ("B").

- Finally, we construct another prompt for the LLM, this time including both the "A" and "B". We instruct the LLM to rank the recommended movies according to how well they align with the user's interests, as reflected in their profile. The LLM then provides a ranked list of the recommended movies, ranging from the most relevant to the least relevant, based on the user's established preferences.
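The three steps above can be sketched independently of Spark and of any particular LLM provider. In this sketch, `call_llm` is a hypothetical callable that takes a prompt string and returns the model's text, and `als_titles` stands for the titles produced by the ALS model; the prompt wording is an assumption.

```python
def build_profile_prompt(liked_titles):
    """Step 1: ask for a summary ("A") of the user's interests."""
    return ("Summarize this user's movie interests (genres, themes, styles). "
            "Liked movies, most preferred first: " + ", ".join(liked_titles) + ".")


def build_ranking_prompt(profile, recommended_titles):
    """Step 3: combine the profile ("A") with the recommendations ("B")."""
    return (f"USER_INTEREST_PROFILE:\n{profile}\n\n"
            "Rank the following recommended movies from most relevant to least "
            "relevant for this user: " + ", ".join(recommended_titles) + ".")


def rerank(liked_titles, als_titles, call_llm):
    """Run the profile -> filter -> rank flow with a pluggable LLM callable."""
    profile = call_llm(build_profile_prompt(liked_titles))       # "A"
    liked = set(liked_titles)
    fresh = [t for t in als_titles if t not in liked]            # "B"
    return call_llm(build_ranking_prompt(profile, fresh))


# Usage with a fake LLM so the flow can be exercised offline.
calls = []

def fake_llm(prompt):
    calls.append(prompt)
    return "likes romance" if len(calls) == 1 else "1. Emma (1996)"

print(rerank(["Titanic (1997)"], ["Titanic (1997)", "Emma (1996)"], fake_llm))
```

Passing the LLM in as a callable keeps the flow testable without network access.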

Code

Most of our code is referenced from the article below, please read through it to have a better understanding!

Below is a brief walkthrough of the code, starting with the part shared with that article:

```python
import openai
from pyspark.sql import SparkSession
from pyspark.sql.functions import col, lit, pow
from pyspark.ml.recommendation import ALS

# Initialize Spark session
spark = SparkSession.builder.appName('recommendation').getOrCreate()

# Load datasets
movies = spark.read.csv("/sparkdata/movies.csv", header=True)
ratings = spark.read.csv('/sparkdata/ratings.csv', header=True)
links = spark.read.csv("/sparkdata/links.csv", header=True)
tags = spark.read.csv("/sparkdata/tags.csv", header=True)

# Cast the ratings DataFrame columns to the correct data types
ratings = (
    ratings.select("userId", "movieId", "rating")
    .withColumn('userId', col('userId').cast('int'))
    .withColumn('movieId', col('movieId').cast('int'))
    .withColumn('rating', col('rating').cast('float'))
)

# Train / validation / test split
train, validation, test = ratings.randomSplit([0.6, 0.2, 0.2], seed=0)


# Define the RMSE function
def RMSE(predictions):
    squared_diff = predictions.withColumn(
        "squared_diff", pow(col("rating") - col("prediction"), 2)
    )
    mse = squared_diff.selectExpr("mean(squared_diff) as mse").first().mse
    return mse ** 0.5


# Define the GridSearch function
def GridSearch(train, valid, num_iterations, reg_param, n_factors):
    min_rmse = float('inf')
    best_n = -1
    best_reg = 0
    best_model = None

    # Run grid search over every combination of the given parameter values
    for n in n_factors:
        for reg in reg_param:
            als = ALS(rank=n,
                      maxIter=num_iterations,
                      seed=0,
                      regParam=reg,
                      userCol="userId",
                      itemCol="movieId",
                      ratingCol="rating",
                      coldStartStrategy="drop")
            model = als.fit(train)
            predictions = model.transform(valid)
            rmse = RMSE(predictions)
            print('{} latent factors and regularization = {}: validation RMSE is {}'.format(n, reg, rmse))

            # Track the best model using validation RMSE
            if rmse < min_rmse:
                min_rmse = rmse
                best_n = n
                best_reg = reg
                best_model = model

    # Training RMSE of the best model
    pred = best_model.transform(train)
    train_rmse = RMSE(pred)

    # Best model and its metrics
    print('\nThe best model has {} latent factors and regularization = {}:'.format(best_n, best_reg))
    print('training RMSE is {}; validation RMSE is {}'.format(train_rmse, min_rmse))
    return best_model


# Train the model
ranks = [6, 8, 10, 12]
reg_params = [0.05, 0.1, 0.2, 0.4, 0.8]
final_model = GridSearch(train, validation, 10, reg_params, ranks)
```

- Initialization: First, we initialize a Spark session and load several datasets: movies, ratings, links, and tags.

- Data Preparation: The ratings dataset is cleaned and converted to the correct data types. Then, the ratings data is split into three subsets: training, validation, and test data.

- Model Training: The code defines a function called `GridSearch` to perform a grid search over different combinations of model parameters. It iterates over various values for the number of latent factors (`n_factors`) and the regularization parameter (`reg_param`). For each combination of parameters, it trains an ALS model on the training data and evaluates its performance on the validation data using Root Mean Squared Error (RMSE).

- Model Evaluation: The function tracks the best model based on the lowest RMSE on the validation set. After completing the grid search, it prints out the best model's parameters and its training and validation RMSE.

- Execution: The code then calls the `GridSearch` function with predefined ranges for the number of latent factors and the regularization parameter. It trains the ALS model using the training data and validates it on the validation set to find the best combination of parameters.

- Result: Finally, it prints out the best model's parameters and its training and validation RMSE, and returns the best model.
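For reference, the quantity computed by the `RMSE` helper is the standard root mean squared error over the N (user, movie) pairs that have both a true rating and a prediction:

$$
\mathrm{RMSE} = \sqrt{\frac{1}{N}\sum_{(u,i)} \left(r_{ui} - \hat{r}_{ui}\right)^2}
$$

As a small worked example with made-up numbers, true ratings of 4.0 and 3.0 with predictions of 3.5 and 4.0 give squared errors of 0.25 and 1.0, so

$$
\mathrm{RMSE} = \sqrt{\frac{0.25 + 1.0}{2}} = \sqrt{0.625} \approx 0.79
$$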

```python
# For a single user example
user_id = 12
user_ratings = ratings.filter(ratings.userId == user_id)
liked_movies = user_ratings.join(movies, 'movieId').orderBy('rating', ascending=False)

# Fetch recommendations
not_rated_by_user = movies.join(user_ratings, user_ratings.movieId == movies.movieId, 'left_anti')
not_rated_by_user = not_rated_by_user.withColumn('userId', lit(user_id))
not_rated_by_user = not_rated_by_user.withColumn('movieId', not_rated_by_user['movieId'].cast('int'))

# Transform using the final model
recommendations = final_model.transform(not_rated_by_user)
recommendations_ordered = recommendations.orderBy('prediction', ascending=False)
```

This code snippet generates movie recommendations for a single user based on their previous ratings, using the trained ALS recommendation model. Here's a simplified explanation:

- Filtering User Ratings: It selects all the ratings given by a specific user (identified by `user_id`) from the ratings dataset.

- Fetching Liked Movies: It joins the user's ratings with the movies dataset to get details about the movies the user has rated. These movies are sorted by rating in descending order to show the user's liked movies first.

- Generating Recommendations: It identifies movies that the user has not yet rated by performing a left anti-join between all movies and the user's rated movies. Then, it assigns the user's ID to these unrated movies to create a new dataset.

- Transforming with the Model: It applies the final ALS recommendation model (`final_model`) to the unrated movies dataset to predict the ratings that the user might give to each movie.

- Ordering Recommendations: It sorts the predicted ratings in descending order to provide recommendations starting with the movies predicted to be most liked by the user.

In summary, this code segment helps recommend movies to a specific user by leveraging their past ratings and the trained recommendation model to predict which unrated movies they might enjoy.

```python
# Setup LLM client
openai.api_key = 'esecret_***.........'

client = openai.OpenAI(
    base_url="https://api.endpoints.anyscale.com/v1",
    api_key=openai.api_key
)
```

This code snippet sets up the client for interacting with the Anyscale API. Here's a simplified explanation:

- Setting API Key: It assigns the API key required for authentication to access the services. (Log in to the Anyscale website to obtain an API key and other necessary credentials.)

- Initializing Client: It initializes the client for accessing the API, specifying the base URL and API key. The base URL points to the endpoint for the Anyscale services, and the API key is used for authentication to access these services.

In summary, this code segment prepares the necessary credentials and configuration to communicate with the API for further interactions and requests.

```python
# Construct LLM message for user's liked movies
liked_movies_titles = liked_movies.select('title').rdd.flatMap(lambda x: x).collect()
print(liked_movies_titles)

liked_movies_message = f"Generate a user profile based on the following liked movies: {', '.join(liked_movies_titles)}."
print(liked_movies_message)

# Send liked movies prompt to LLM service
response_liked_movies = client.chat.completions.create(
    model="meta-llama/Llama-2-70b-chat-hf",
    messages=[{"role": "user", "content": liked_movies_message}],
    temperature=0.7
)

# Print completion texts for liked movies
print("LLM Response for Liked Movies:")
for completion in response_liked_movies.choices:
    print(completion.message.content)

# Keep the generated profile so that it can be included in the ranking prompt
user_interest_profile = response_liked_movies.choices[0].message.content

# Construct LLM prompt for movie recommendations ranking
recommendation_titles = recommendations_ordered.select('title').limit(10).rdd.flatMap(lambda x: x).collect()
print(recommendation_titles)

recommendations_prompt = (
    f"USER_INTEREST_PROFILE:\n{user_interest_profile}\n\n"
    "Based on the USER_INTEREST_PROFILE, please rank the following recommended movies "
    f"from most relevant to least relevant for the user: {', '.join(recommendation_titles)}."
)

# Send recommendations prompt to LLM service
response_recommendations = client.chat.completions.create(
    model="meta-llama/Llama-2-70b-chat-hf",
    messages=[{"role": "user", "content": recommendations_prompt}],
    temperature=0.7
)

# Print completion texts for recommendations ranking
print("\nLLM Response for Recommendations Ranking:")
for completion in response_recommendations.choices:
    print(completion.message.content)
```

- Constructing Liked Movies Prompt: This part collects the titles of movies that the user has liked from the DataFrame, formats them into a single string message, and prints it. The message is structured to prompt the language model to generate a user profile based on the provided liked movies.

- Sending Liked Movies Prompt to LLM Service: Here, the constructed prompt message is sent to the LLM service using the `client.chat.completions.create()` function. This function sends a request to the LLM model specified in the `model` parameter, with the provided prompt message.

- Printing Liked Movies Response: After receiving a response from the LLM service, this part of the code iterates through the generated completions (possible responses) and prints them out. Each completion represents a potential user profile generated by the LLM model based on the liked movies prompt. The first completion is stored as the user interest profile.

- Constructing Recommendations Prompt: Similar to the first step, this section gathers the titles of the top 10 recommended movies and prints them. It then builds a prompt that contains the stored user interest profile, followed by an instruction to rank the recommended movies against that profile.

- Sending Recommendations Prompt to LLM Service: Just like before, the constructed prompt message is sent to the LLM service using the `client.chat.completions.create()` function. This time, the request asks the LLM model to rank the recommended movies based on the user's interest profile.

- Printing Recommendations Response: After receiving the responses from the LLM service, this part of the code iterates through the completions and prints them out. Each completion represents a ranked list of recommended movies generated by the LLM model based on the user's interest profile.

Output

['First Knight (1995)', 'Circle of Friends (1995)', 'Junior (1994)', 'Emma (1996)', 'Shine (1996)', 'Titanic (1997)', "'burbs, The (1989)", "She's All That (1999)", '10 Things I Hate About You (1999)', 'Never Been Kissed (1999)', 'Ghostbusters II (1989)', 'Romeo and Juliet (1968)', 'Love Actually (2003)', 'Notebook, The (2004)', 'Pride & Prejudice (2005)', 'Twilight (2008)', 'Little Women (1994)', 'Gone with the Wind (1939)', 'Billy Elliot (2000)', 'What Women Want (2000)', 'Clueless (1995)', 'First Wives Club, The (1996)', 'Big Daddy (1999)', 'Sweet Home Alabama (2002)', 'Four Weddings and a Funeral (1994)', 'So I Married an Axe Murderer (1993)', 'Groundhog Day (1993)', 'Deep Impact (1998)', 'Splash (1984)', 'Miracle on 34th Street (1994)', 'Beavis and Butt-Head Do America (1996)', 'Karate Kid, Part II, The (1986)']

Generate a user profile based on the following liked movies: First Knight (1995), Circle of Friends (1995), Junior (1994), Emma (1996), Shine (1996), Titanic (1997), 'burbs, The (1989), She's All That (1999), 10 Things I Hate About You (1999), Never Been Kissed (1999), Ghostbusters II (1989), Romeo and Juliet (1968), Love Actually (2003), Notebook, The (2004), Pride & Prejudice (2005), Twilight (2008), Little Women (1994), Gone with the Wind (1939), Billy Elliot (2000), What Women Want (2000), Clueless (1995), First Wives Club, The (1996), Big Daddy (1999), Sweet Home Alabama (2002), Four Weddings and a Funeral (1994), So I Married an Axe Murderer (1993), Groundhog Day (1993), Deep Impact (1998), Splash (1984), Miracle on 34th Street (1994), Beavis and Butt-Head Do America (1996), Karate Kid, Part II, The (1986).

LLM Response for Liked Movies:

Sure, based on the movies you've listed, here's a possible user profile:

Name: Sarah

Age: 30-40

Gender: Female

Location: United States, possibly the Northeast or Midwest regions

Occupation: Marketing professional or elementary school teacher

Interests: Romantic comedies, period dramas, teen movies, and classic romances. Enjoys light-hearted, feel-good films with strong female leads and witty dialogue. Also appreciates movies with memorable soundtracks and catchy musical numbers.

Personality: Sentimental, optimistic, and family-oriented. Values close relationships and enjoys watching movies that explore themes of love, friendship, and personal growth. Appreciates a good laugh and doesn't mind a little cheese in her movies.

Background: Sarah grew up in a small town in the United States and has a strong affection for classic Hollywood films. She studied English literature in college and worked as a teacher before transitioning to a career in marketing. She's married with two children and enjoys spending her free time watching movies, reading, and practicing yoga.

Favorite actors/actresses: Julia Roberts, Meg Ryan, Tom Hanks, Hugh Grant, Sandra Bullock, and Leonardo DiCaprio.

Favorite directors: Nancy Meyers, Nora Ephron, Rob Reiner, and James Cameron.

Favorite genres: Romantic comedy, drama, and teen movies.

Favorite movies: First Knight, Circle of Friends, Emma, Shine, Titanic, 'burbs, She's All That, 10 Things I Hate About You, Never Been Kissed, Ghostbusters II, Romeo and Juliet, Love Actually, Notebook, Pride & Prejudice, Twilight, Little Women, Gone with the Wind, Billy Elliot, What Women Want, Clueless, First Wives Club, Big Daddy, Sweet Home Alabama, Four Weddings and a Funeral, So I Married an Axe Murderer, Groundhog Day, Deep Impact, Splash, Miracle on 34th Street, Beavis and Butt-Head Do America, Karate Kid, Part II.

Favorite soundtracks: Titanic, The Bodyguard, Grease, Dirty Dancing, and The Lion King.

Favorite musicals: The Sound of Music, West Side Story, and Chicago.

Favorite books: Pride and Prejudice, Jane Eyre, Wuthering Heights, and The Notebook.

Hobbies: Yoga, reading, hiking, and baking.

Relationship status: Married with two children.

I hope this user profile fits your needs! Let me know if you have any further questions.

['Strictly Sexual (2008)', 'On the Beach (1959)', 'Man from Snowy River, The (1982)', 'Thief (1981)', 'Glory Road (2006)', "Adam's Rib (1949)", 'Frozen River (2008)', 'Saving Face (2004)', 'Mr. Skeffington (1944)', '12 Angry Men (1997)']

LLM Response for Recommendations Ranking:

Based on the user's interest profile, here's a ranking of the recommended movies from most relevant to least relevant:

1. Frozen River (2008) - This movie is a drama about two women who become involved in smuggling immigrants across the US-Canada border. It aligns well with the user's interest in intense/suspenseful movies and dramas.

2. 12 Angry Men (1997) - This movie is a drama about a jury deliberating the fate of a young man accused of murder. It aligns with the user's interest in dramas and intense/suspenseful movies.

3. Glory Road (2006) - This movie is a sports drama about a basketball coach who leads a team of African American players to victory. It aligns with the user's interest in sports movies and dramas.

4. Saving Face (2004) - This movie is a romantic comedy-drama about a young woman who falls in love with a woman after being dumped by her boyfriend. It aligns with the user's interest in romantic movies and LGBTQ+ themes.

5. Adam's Rib (1949) - This movie is a romantic comedy-drama about a lawyer who takes on a case involving a woman who shot her husband. It aligns with the user's interest in romantic movies and dramas.

6. Mr. Skeffington (1944) - This movie is a drama about a woman who falls in love with a wealthy man and must navigate his family's disapproval. It aligns with the user's interest in dramas and romantic movies.

7. Strictly Sexual (2008) - This movie is a romantic comedy-drama about two women who enter into a sexual relationship. It aligns with the user's interest in LGBTQ+ themes and romantic movies.

8. Thief (1981) - This movie is a crime drama about a professional thief who takes on an apprentice. It aligns with the user's interest in crime/gangster movies and intense/suspenseful movies.

9. On the Beach (1959) - This movie is a drama about a group of people who survive a nuclear apocalypse. It aligns with the user's interest in intense/suspenseful movies and dramas.

10. Man from Snowy River, The (1982) - This movie is an adventure drama about a young man who helps a group of people navigate the Australian outback. It aligns with the user's interest in adventure movies and dramas.

Note that the ranking is not absolute and may vary based on individual preferences and mood.
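Because the ranking comes back as free text, a downstream system usually needs to turn it back into a list of titles. The sketch below is one possible way to do that, assuming the response uses a numbered list in which each title ends with a four-digit year in parentheses, as in the output above. The function name is hypothetical, and titles the model invents or omits are handled conservatively.

```python
import re


def parse_ranking(response_text, candidates):
    """Extract ranked titles from a numbered list, keeping only known candidates."""
    # Matches lines such as "2. 12 Angry Men (1997) - This movie is ..."
    pattern = re.compile(r"^\s*\d+\.\s+(.+?\(\d{4}\))", re.MULTILINE)
    known = set(candidates)
    ranked = []
    for title in pattern.findall(response_text):
        if title in known and title not in ranked:
            ranked.append(title)
    # Candidates the model left out keep their original order at the end.
    missing = [t for t in candidates if t not in ranked]
    return ranked + missing


response_text = """Based on the user's interest profile, here's a ranking:

1. Frozen River (2008) - This movie is a drama about two women.

2. 12 Angry Men (1997) - This movie is a drama about a jury.

3. Made Up Movie (2020) - This title was not among the candidates.
"""
candidates = ["12 Angry Men (1997)", "Frozen River (2008)", "Thief (1981)"]
print(parse_ranking(response_text, candidates))
```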

Summary

This blog showed how the natural language processing capabilities of LLMs can improve user recommendations. Given prompts that list a user's liked movies, LLMs generate rich user profiles that capture nuanced tastes and preferences. These profiles serve as valuable inputs for recommendation models, enabling personalised and context-aware movie suggestions. LLMs can also rank recommended movies against a user interest profile, delivering tailored recommendations from a vast pool of options.

The seamless interaction between users and recommendation systems facilitated by LLMs holds immense promise across various domains. From e-commerce platforms to streaming services, the ability to generate accurate user profiles and provide finely-tuned recommendations enhances user engagement and satisfaction.

As recommendation systems continue to evolve, harnessing the power of LLMs emerges as a cornerstone for delivering highly personalised and contextually relevant recommendations in today's data-driven landscape.

For more papers and resources on LLM-based recommendations, refer to the GitHub repository below:

Most importantly, please subscribe to our newsletter! It contains the latest updates and insights happening in the world of AI!

Stay tuned for more!