Learn about Deep Belief Networks (DBNs)

Introduction

Greetings, present and future data scientists! If you want to learn about Deep Belief Networks in a comprehensive yet simple way, you’re in the right place. The knowledge in this article will set you up just fine.

This is the era of artificial intelligence. We encounter AI (Artificial Intelligence) in our everyday lives. Machine learning in particular plays a crucial role in taming the complexity of growing IT networks.

No doubt, we’ve come a long way with the help of AI, but we still encounter many problems with standard artificial neural networks. So the question arises: which kind of network addresses these problems?

You got it right: it's the Deep Belief Network.

What are Deep Belief Networks?

Now, let’s find out what Deep Belief Networks really are. Deep Belief Networks (DBNs) were invented as a solution to the problems encountered when training deep, many-layered networks with traditional neural network methods, such as slow learning, getting stuck in local minima due to poor parameter selection, and requiring large amounts of training data.

DBNs were initially introduced in (Larochelle, Erhan, Courville, Bergstra, & Bengio, 2007) as probabilistic generative models to provide an alternative to the discriminative nature of traditional neural nets.

Generative models provide a joint probability distribution over input data and labels, facilitating the estimation of both P(x|y) and P(y|x), while discriminative models only use the latter model P(y|x).
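To make the distinction concrete, here is a minimal sketch that starts from a joint distribution and derives both conditionals from it. The probability table is a made-up example over one binary feature x and one binary label y, and the function names are illustrative only.

```python
# Hypothetical joint distribution P(x, y) over a binary feature x and a binary label y.
# The numbers are invented for illustration; they only need to sum to 1.
joint = {
    (0, 0): 0.30, (0, 1): 0.10,
    (1, 0): 0.20, (1, 1): 0.40,
}

def marginal_x(x):
    """P(x): sum the joint over all labels."""
    return sum(p for (xi, _), p in joint.items() if xi == x)

def marginal_y(y):
    """P(y): sum the joint over all feature values."""
    return sum(p for (_, yi), p in joint.items() if yi == y)

def p_x_given_y(x, y):
    """P(x|y) = P(x, y) / P(y)."""
    return joint[(x, y)] / marginal_y(y)

def p_y_given_x(y, x):
    """P(y|x) = P(x, y) / P(x)."""
    return joint[(x, y)] / marginal_x(x)
```

A generative model that knows the whole table can answer both questions, whereas a discriminative model would only ever learn the equivalent of `p_y_given_x`.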

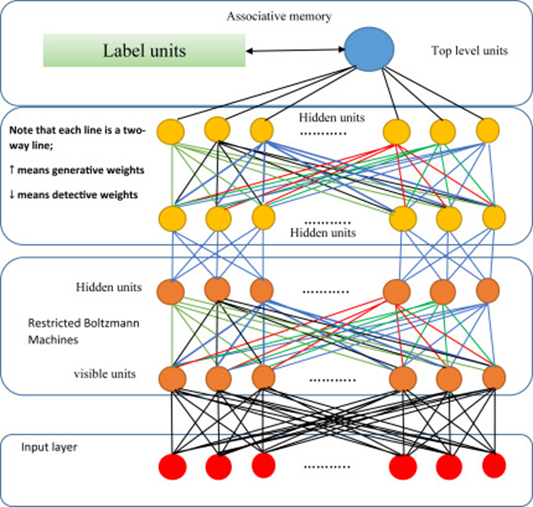

DBNs consist of several layers of neural networks known as “Boltzmann Machines”. Let’s have a look at the figure.

We can see that each of the Boltzmann machines is restricted to a single visible layer and a single hidden layer.

We can also describe the deep belief network as a stack of Restricted Boltzmann Machines (RBMs). In this stack, each layer connects to both the previous layer and the next one, but the nodes within a single layer do not communicate with each other horizontally.

Boltzmann Machine

Let’s have a short overview of what the Boltzmann Machine is. It takes its name from the Boltzmann distribution, an integral part of statistical mechanics that helps us understand the impact of parameters like temperature and entropy on quantum states in thermodynamics.

Boltzmann Machines are really special. Now you’d be wondering, what makes Boltzmann Machines so special? Don’t worry, we’re just going to find out.

Almost all other models use Stochastic Gradient Descent to learn and optimize patterns, but the Boltzmann Machine learns patterns without it. A Boltzmann Machine doesn’t give the typical 1-or-0 output, yet it still manages to figure out the patterns.

This, my friend, makes it special.

Restricted Boltzmann Machine

Restricted Boltzmann Machines (RBMs) can be considered a binary version of factor analysis. So instead of having many factors, a binary variable determines the network output. The widespread use of RBMs allows for more efficient training of the generative weights of the hidden units. These hidden units are trained to capture higher-order data correlations that are observed in the visible units.

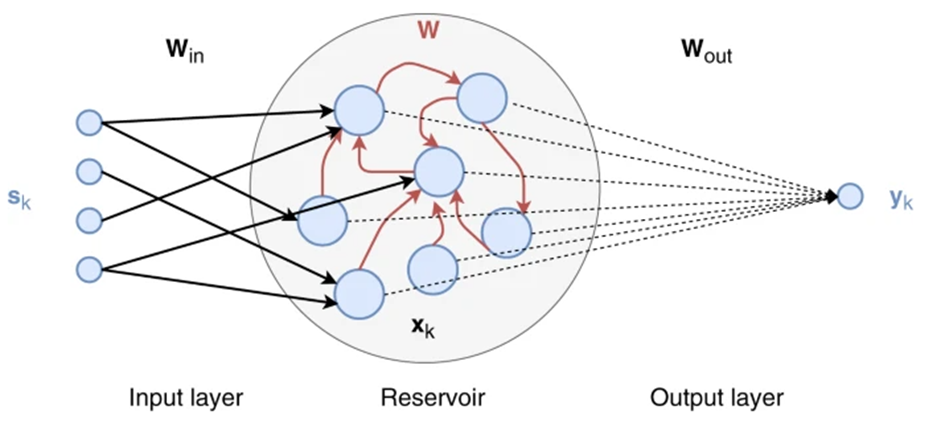

The generative weights are obtained using an unsupervised greedy layer-by-layer method, enabled by contrastive divergence (Hinton, 2002). The RBM training process, which relies on Gibbs sampling, starts by presenting a vector, v, to the visible units, which forward values to the hidden units. In the reverse direction, the visible unit activations are sampled stochastically from the hidden units to reconstruct the original input. Finally, these new visible activations are forwarded to the hidden units again, giving a single-step reconstruction of the hidden unit activations, h.
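The up, down, up pass described above can be sketched as one contrastive divergence (CD-1) update. This is a minimal illustration in plain Python, not a tuned implementation: the names `cd1_step`, `W`, `b`, `c` and `lr`, the layer sizes, and the learning rate are all assumptions chosen for the example.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sample(probs, rng):
    """Draw binary states from a list of Bernoulli probabilities."""
    return [1 if rng.random() < p else 0 for p in probs]

def hidden_probs(v, W, c):
    """P(h_j = 1 | v); W[i][j] connects visible unit i to hidden unit j."""
    return [sigmoid(c[j] + sum(v[i] * W[i][j] for i in range(len(v))))
            for j in range(len(c))]

def visible_probs(h, W, b):
    """P(v_i = 1 | h), using the same weights in the reverse direction."""
    return [sigmoid(b[i] + sum(h[j] * W[i][j] for j in range(len(h))))
            for i in range(len(b))]

def cd1_step(v0, W, b, c, lr, rng):
    """One CD-1 update for a single training vector; returns the reconstruction."""
    h0_p = hidden_probs(v0, W, c)            # up: visible -> hidden
    h0 = sample(h0_p, rng)
    v1 = sample(visible_probs(h0, W, b), rng)  # down: stochastic reconstruction
    h1_p = hidden_probs(v1, W, c)            # up again: single-step hidden reconstruction
    for i in range(len(b)):
        for j in range(len(c)):
            # positive phase (data) minus negative phase (reconstruction)
            W[i][j] += lr * (v0[i] * h0_p[j] - v1[i] * h1_p[j])
        b[i] += lr * (v0[i] - v1[i])
    for j in range(len(c)):
        c[j] += lr * (h0_p[j] - h1_p[j])
    return v1

# Usage with assumed sizes: 6 visible units, 3 hidden units.
rng = random.Random(0)
n_visible, n_hidden = 6, 3
W = [[rng.gauss(0, 0.1) for _ in range(n_hidden)] for _ in range(n_visible)]
b = [0.0] * n_visible
c = [0.0] * n_hidden
recon = cd1_step([1, 1, 1, 0, 0, 0], W, b, c, 0.1, rng)
```

In practice the step would be repeated over many training vectors and epochs; a single call only nudges the weights slightly.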

An RBM is like a Boltzmann Machine with one small difference: there are no connections between nodes within the same group, i.e. visible-to-visible or hidden-to-hidden. This makes it easier to implement and train.

To put it simply, an RBM has fewer connections.
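A quick count shows how much the restriction saves. The sketch below assumes the general Boltzmann Machine is fully connected (every pair of units may share a weight); the function names and the layer sizes are illustrative.

```python
def boltzmann_connections(n_visible, n_hidden):
    """Fully connected machine: one weight per unordered pair of units."""
    n = n_visible + n_hidden
    return n * (n - 1) // 2

def rbm_connections(n_visible, n_hidden):
    """Restricted machine: weights only between a visible and a hidden unit."""
    return n_visible * n_hidden

full = boltzmann_connections(6, 4)   # 10 units -> 45 pairs
restricted = rbm_connections(6, 4)   # 6 * 4 -> 24 weights
```

With 6 visible and 4 hidden units, the fully connected machine has 45 connections and the restricted one has 24.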

How do we create a Deep Belief Network?

This network becomes more interesting once you get to know the process of its creation. Let’s dig into the creation of the Deep Belief Network.

Each record type contains the RBMs (Restricted Boltzmann Machines) that make up the layers of the network, as well as a vector reflecting the sizes of the layers and, in the case of the classification DBN (CDBN), the number of classes in the representative dataset.

The DBN record reflects a model that is nothing more than stacked RBMs, whereas the CDBN record also contains the top-level associative memory, which is actually a CRBM record. This allows the top layer to be trained to generate class labels corresponding to the input data vector and to classify unknown data vectors.
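One way to picture these records is as plain data classes. The sketch below is an assumption about how such records might look, not the layout of any particular library: the field names, the helper functions, and the choice to size the top layer's input as "last hidden layer plus number of classes" are all illustrative.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class RBM:
    weights: List[List[float]]   # weights[i][j]: visible unit i <-> hidden unit j
    visible_bias: List[float]
    hidden_bias: List[float]

@dataclass
class DBN:
    layer_sizes: List[int]       # e.g. [6, 4, 3]
    rbms: List[RBM]              # one RBM per pair of adjacent layers

@dataclass
class CDBN:
    layer_sizes: List[int]
    n_classes: int
    rbms: List[RBM]              # the stacked lower layers
    top: RBM                     # stands in for the CRBM associative memory

def empty_rbm(n_visible, n_hidden):
    """Zero-initialised RBM, used here only to show the shapes."""
    return RBM([[0.0] * n_hidden for _ in range(n_visible)],
               [0.0] * n_visible, [0.0] * n_hidden)

def make_dbn(layer_sizes):
    pairs = zip(layer_sizes[:-1], layer_sizes[1:])
    return DBN(list(layer_sizes), [empty_rbm(v, h) for v, h in pairs])

def make_cdbn(layer_sizes, n_classes):
    lower = make_dbn(layer_sizes[:-1]).rbms
    # Assumption: the top layer sees the last lower-layer output plus the class labels.
    top = empty_rbm(layer_sizes[-2] + n_classes, layer_sizes[-1])
    return CDBN(list(layer_sizes), n_classes, lower, top)

dbn = make_dbn([6, 4, 3])
cdbn = make_cdbn([6, 4, 3], n_classes=2)
```

Here `dbn` holds two stacked RBMs (6 to 4 and 4 to 3), while `cdbn` holds one lower RBM plus a top layer whose visible side is widened by the two class units.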

Learning a Deep Belief Network

Training a DBN is greatly simplified by the fact that it’s composed of RBMs that are trained in an unsupervised manner. RBM training time dominates the overall DBN training time, but it makes for simple code.

CDBNs (classification Deep Belief Networks) require the observation labels to be available during the training of the top layer, so a training session involves first training the bottom layer, propagating the dataset through the learned RBM, and then using that new transformed dataset as the training data for the next RBM.

This continues until the dataset has been propagated through the penultimate trained RBM, where the labels are concatenated with the transformed dataset and used to train the top-layer associative memory.
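The procedure above can be sketched end to end in plain Python. Everything named here (`train_rbm`, `train_cdbn`, `up`, `down`, `one_hot`) is hypothetical, and the RBM trainer is a compact CD-1 variant that reconstructs with probabilities rather than sampled states, included only so the data flow is runnable. The part to focus on is `train_cdbn`: train a layer, propagate the data, repeat, then concatenate the labels before training the top layer.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def up(v, W, c):
    """Hidden activation probabilities for one visible vector."""
    return [sigmoid(c[j] + sum(vi * row[j] for vi, row in zip(v, W)))
            for j in range(len(c))]

def down(h, W, b):
    """Visible activation probabilities for one hidden vector."""
    return [sigmoid(b[i] + sum(hj * w for hj, w in zip(h, W[i])))
            for i in range(len(b))]

def train_rbm(data, n_hidden, epochs, lr, rng):
    """Compact CD-1 trainer; returns (weights, visible bias, hidden bias)."""
    n_visible = len(data[0])
    W = [[rng.gauss(0, 0.1) for _ in range(n_hidden)] for _ in range(n_visible)]
    b, c = [0.0] * n_visible, [0.0] * n_hidden
    for _ in range(epochs):
        for v0 in data:
            h0 = up(v0, W, c)
            h_state = [1.0 if rng.random() < p else 0.0 for p in h0]
            v1 = down(h_state, W, b)
            h1 = up(v1, W, c)
            for i in range(n_visible):
                b[i] += lr * (v0[i] - v1[i])
                for j in range(n_hidden):
                    W[i][j] += lr * (v0[i] * h0[j] - v1[i] * h1[j])
            for j in range(n_hidden):
                c[j] += lr * (h0[j] - h1[j])
    return W, b, c

def one_hot(label, n_classes):
    return [1.0 if k == label else 0.0 for k in range(n_classes)]

def train_cdbn(data, labels, layer_sizes, n_classes, epochs=5, lr=0.1, seed=0):
    rng = random.Random(seed)
    rbms, current = [], data
    # Unsupervised phase: every layer except the top one.
    for n_hidden in layer_sizes[1:-1]:
        W, b, c = train_rbm(current, n_hidden, epochs, lr, rng)
        rbms.append((W, b, c))
        current = [up(v, W, c) for v in current]   # propagate through the learned RBM
    # Top layer: concatenate labels with the transformed data.
    joined = [list(v) + one_hot(y, n_classes) for v, y in zip(current, labels)]
    top = train_rbm(joined, layer_sizes[-1], epochs, lr, rng)
    return rbms, top

# Toy dataset (made up): two patterns, two classes.
data = [[1, 1, 1, 0, 0, 0], [1, 1, 0, 0, 0, 0],
        [0, 0, 0, 1, 1, 1], [0, 0, 0, 0, 1, 1]]
labels = [0, 0, 1, 1]
rbms, top = train_cdbn(data, labels, layer_sizes=[6, 4, 3], n_classes=2)
```

With layer sizes `[6, 4, 3]` and two classes, the lower RBM maps 6 inputs to 4 hidden units, and the top layer is trained on 4 + 2 = 6 visible values.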

Benefits & Drawbacks of Deep Belief Networks

Let's discuss some benefits and drawbacks of DBNs.

Benefits

- DBNs make efficient use of hidden layers (a higher performance gain from adding layers than with a multilayer perceptron).

- DBNs are robust in classification to variations in size, position, color, and view angle (rotation).

- The same DBN approach can be applied to many different applications and data types.

- DBNs can deliver strong performance even when the amount of data is huge.

- DBN features are learned automatically and tuned for the desired outcome.

- DBNs avoid time-consuming techniques such as manual feature extraction.

- Last but not least, DBNs are flexible enough to adapt to new problems in the future.

Drawbacks

- DBNs have demanding hardware requirements.

- DBNs require huge amounts of data to perform better than other techniques.

- DBNs are expensive to train because of their complex data models.

- Training at scale can require hundreds of machines.

- DBNs are difficult for less experienced people to use.

- DBN output is hard to interpret on its own, so classifiers are needed to make sense of it.

Comparison between DBN & DBM

We’ve discussed the Deep Belief Network and the Boltzmann Machine. Now let’s compare the DBN with the Deep Boltzmann Machine (DBM) and see how they differ.

In a Deep Belief Network, the connections between the lower layers are directed, whereas in a Deep Boltzmann Machine the connections between all layers are undirected.

That’s why, in a Deep Belief Network, the top two layers form a Restricted Boltzmann Machine (an undirected model) and the layers below them form a directed generative model.

In a Deep Boltzmann Machine, by contrast, every pair of adjacent layers forms an RBM.

The DBM’s approximate inference procedure uses top-down feedback in addition to the usual bottom-up pass. This lets Deep Boltzmann Machines better incorporate uncertainty about ambiguous inputs.

On the other hand, the DBM’s approximate inference is based on a mean-field approach, which is slow compared to the single bottom-up pass used in a DBN (Deep Belief Network), because mean-field inference has to be run for every new test input.
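The difference in inference cost can be sketched for a network with two hidden layers. This is a simplified illustration: biases are omitted, the weight matrices `W1` and `W2` and the iteration count are assumptions, and the mean-field update shown is the standard fixed-point form in which the first hidden layer combines bottom-up input with top-down feedback.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def project_up(W, v):
    """sum_i v[i] * W[i][j] for each unit j in the layer above."""
    return [sum(v[i] * W[i][j] for i in range(len(v))) for j in range(len(W[0]))]

def project_down(W, h):
    """sum_j W[i][j] * h[j] for each unit i in the layer below."""
    return [sum(W[i][j] * h[j] for j in range(len(h))) for i in range(len(W))]

def dbn_bottom_up(v, W1, W2):
    """DBN-style inference: one pass, layer by layer."""
    h1 = [sigmoid(z) for z in project_up(W1, v)]
    h2 = [sigmoid(z) for z in project_up(W2, h1)]
    return h1, h2

def dbm_mean_field(v, W1, W2, n_iters=10):
    """DBM-style inference: iterate, mixing bottom-up input with top-down feedback."""
    mu1 = [0.5] * len(W2)
    mu2 = [0.5] * len(W2[0])
    bottom = project_up(W1, v)                 # bottom-up signal is fixed for a given v
    for _ in range(n_iters):
        top = project_down(W2, mu2)            # feedback from the layer above
        mu1 = [sigmoid(a + t) for a, t in zip(bottom, top)]
        mu2 = [sigmoid(z) for z in project_up(W2, mu1)]
    return mu1, mu2

# Made-up weights: 3 visible units, 2 units in each hidden layer.
W1 = [[0.5, -0.2], [0.1, 0.4], [-0.3, 0.2]]
W2 = [[0.3, -0.1], [0.2, 0.6]]
v = [1, 0, 1]
h1, h2 = dbn_bottom_up(v, W1, W2)
mu1, mu2 = dbm_mean_field(v, W1, W2)
```

The bottom-up pass costs two matrix products per input, while the mean-field loop repeats its products `n_iters` times for every new input, which is where the extra inference time comes from.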

Applications of Deep Belief Network

DBN has many real-life applications. We’re going to take a look at some of them.

- DBNs are used in object detection, where they detect occurrences of objects of a certain class, such as airplanes, birds and humans.

- DBNs are also used in image generation.

- DBNs are used in image classification.

- DBNs are used in video recognition.

- DBNs are used in motion capture.

- DBNs are used in natural language understanding, e.g. speech processing.

- DBNs are used in human pose estimation.

- DBNs are widely applied across many kinds of datasets.

End Notes

In the article above, we briefly discussed the Deep Belief Network. It should give you a good starting point for learning about DBNs.
